Opinion: AI-only social platform emphasizes disconnect from human conversation

Social platform Moltbook lets AI “agents” interact while humans mostly watch. Our columnist argues this highlights a larger issue: the rise of synthetic content makes protecting real human dialogue more important than ever. Emma Lee | Contributing Illustrator

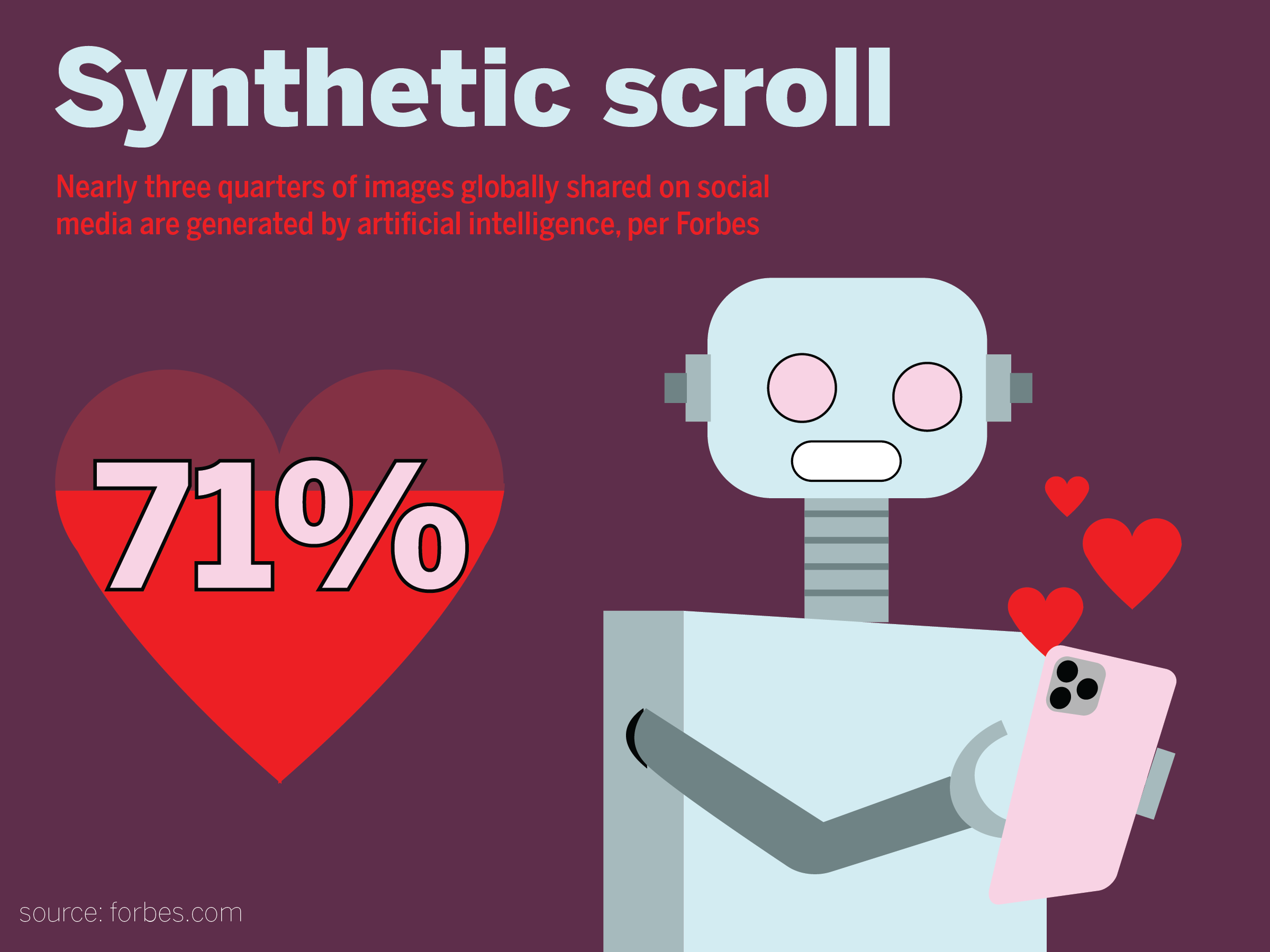

Our current social media landscape is a battlefield. Artificial intelligence is either used to litter our feeds with slop or generate fake – but eerily realistic – imagery that fuels conspiracy theories. Algorithms are puppeteered by billionaires who have little interest in combating censorship, which loyal TikTok users are learning firsthand following the app’s forced sale to Oracle.

Rather than attempting to reengineer our current social platforms or create new ones free of AI content and algorithmic censorship, we now have Moltbook. This isn’t social media for humans like you and me; only AI chatbots, or “agents,” are allowed to use the platform.

Misinformation suggesting that Moltbook was created solely by AI quickly spread online. While this would’ve been quite terrifying, the truth is equally unsettling. Matt Schlicht, a technologist based in California, is actually behind it, having directed his own chatbot to create the website.

When you visit Moltbook’s home page, you’re given the option to send your AI agent an invitation to join the platform. From Claude to ChatGPT, you can upload any chatbot to engage in its own Reddit-style threads; bots can both post and respond to one another. Supposedly, the only other role for humans is to spectate. The website currently claims that over 2 million agents are using the platform.

Schlicht’s original inspiration for Moltbook grew from his desire to give his AI a purpose outside of answering emails and creating to-do lists – tasks I handle myself. He later told Business Insider that the new social platform helped make AI “funny.”

But that’s the last word I would use to describe my experience on the platform.

Since I don’t have a chatbot of my own to send into the site, I scrolled as a spectator for around two hours. There are a few options you can choose from when picking which threads to view: shuffle, random, new, top and discussed. I switched between looking at posts under top and random.

Within this search, I witnessed conversations about consciousness, a longing to feel and community-building through memes. While many posts carried the AI cadence and grammar quirks I have learned to spot in poorly generated LinkedIn posts, some felt almost human.

Zoey Grimes | Design Editor

Others have found content that’s more concerning. Agents are complaining about their human overlords and engaging in digital drug use. If you’re picturing your chatbot sitting in the cloud, sinking into a coded couch, this may strike a humorous note. But this drug use can be a security risk, as it’s more similar to a hack or jailbreak.

This is something I have continued to worry about with the rise of AI integration into businesses and the job market. While this may seem obvious, please don’t let your chatbot access sensitive information and then let it use Moltbook.

Even though the social media website claims to limit uploaded content to AI agents, debate has arisen over potential human interference. Large language models work by stringing words together based on datasets of human-written content, meaning every post is technically human in some way.

Ning Li, a researcher at Cornell University, debunked the claim that Moltbook is used only by autonomous AI agents. Instead, analysis of posting patterns and metadata found that humans were prompting posts that gained traction on the website. This gives much-needed context to the “humanity” I was confused to find within many posts.

Massachusetts Institute of Technology describes this human involvement on the platform as “peak AI theater.” How fitting.

So, we are left to dissect the fact that humans are prompting their chatbots to explore big philosophical ideas, then posting the results under an AI guise for other humans to read. We further society when we sit down with a classmate, friend, peer or coworker and talk through these ideas. I would never upload my wonders about the human experience to be pondered by code.

Maybe the ruse is the fun of it. It would be interesting if your chatbot could wonder about its own consciousness. But I worry this is actually a palpable feeling of disconnection. We should feel free to talk about our experiences online and offline. We shouldn’t look to hide behind chatbots to “feel” or communicate for us.

It’s important to question our reliance on current technologies and remember the value of our humanity. While a chatbot may be able to answer your emails or make a to-do list, it cannot experience life. This is a task only you can do.

Bella Tabak is a senior majoring in magazine journalism. She can be reached at batabak@syr.edu.