Opinion: Artificial misinformation interferes with our elections

AI can be a powerful tool to keep up with politics, but only if used correctly. Our columnist argues students must do research that goes beyond AI search engines in order to ensure the validity of information they take in. Jay Cronkrite | Contributing Illustrator


Artificial intelligence is making it extremely challenging to be an informed citizen. As AI-generated content like images, videos and text floods social media, the average person must sort out what’s real, and more often than not, they can’t. When people lose trust in what they see and read, they stop paying attention altogether. This disengagement poses a serious threat to how Americans participate in politics.

Some may argue that AI can boost civic engagement and political efficacy. In theory, AI has the potential to make information more accessible and break down complex political issues. But in practice, these benefits are undermined by its ability to distort reality at an unprecedented scale. Greater access to information means very little if the public can’t determine whether the information they’re consuming is credible.

The core issue is the sheer volume of misinformation AI produces. Generative AI floods the media landscape with content that is misleading at worst and meaningless noise at best. When people are overwhelmed by this constant stream, they struggle to consume politics effectively, often giving up on staying informed entirely.

Even when false content is identified, the speed and scale of its spread make corrections largely ineffective, as the misinformation has often already taken root in public discourse.

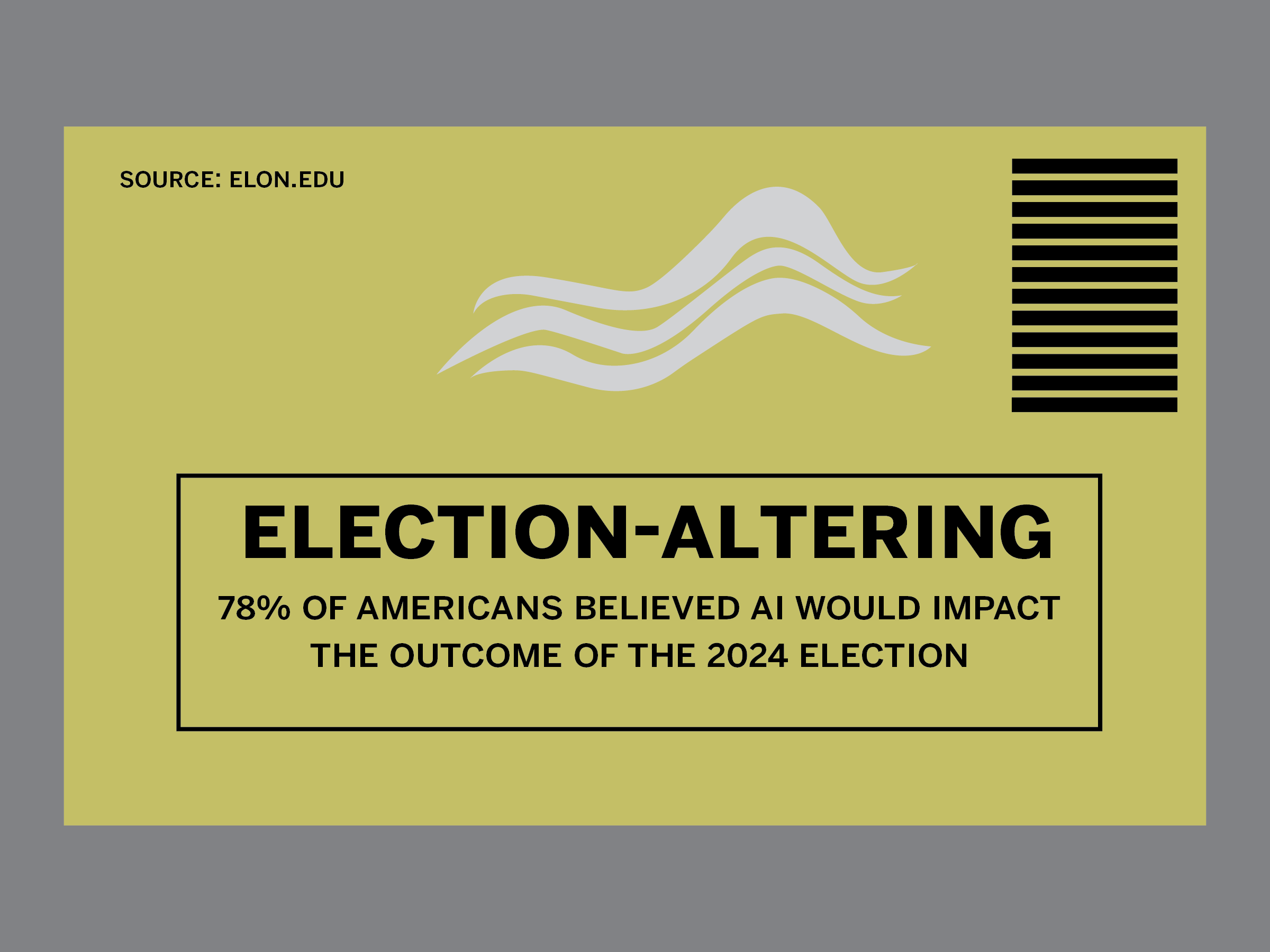

AI is also actively being used to manipulate voters. Experiments during the 2024 presidential race in the United States, the 2025 Canadian federal election and the 2025 Polish presidential race found that AI-driven conversations produced significant shifts in voter preference, larger than those from traditional political advertising, according to a King’s College London research article. If that weren’t concerning enough, 44% of the persuasive impact was driven largely by information density, not accuracy. In other words, the information only needed to sound convincing to voters; it didn’t need to be true.

Just last year, President Donald Trump’s social network Truth Social launched an “anti-woke” artificial intelligence search engine marketed as a more reliable alternative to mainstream platforms. Within weeks, the system designed to boost a specific political narrative began to fracture, contradicting its founders’ claims on issues such as election integrity and economic policy.

When these inconsistencies surfaced, company insiders said changes would be made to better align the AI with the president’s policies, revealing how easily the tool can be leveraged to support an agenda.

What makes this especially difficult to counter is that manipulation doesn’t require a sophisticated operation. A local candidate in a tight race could deploy an AI system to engage voters on social media, learn which issues they care about and reframe its argument accordingly, all from a single laptop with no clear attribution trail and at a minimal cost.

Efforts to influence voters that once required substantial resources are now accessible to almost anyone with an agenda, making it hard to find unbiased sources of information.

The very reason people trust AI is what makes it dangerous. Research from Stanford Graduate School of Business found that people are more open to hearing opposing political messages from AI, assuming it’s more informed and objective. That instinct makes sense on the surface: When a person tries to convince us of their position, we often dismiss them as biased or self-interested.

Sophia Burke | Digital Design Director

The problem is that AI is neither more informed nor more objective. Its output reflects the data it’s trained on and the incentives of the companies that deploy it. If people are more receptive to AI-generated messages than they are to more reliable sources, misinformation will spread like wildfire.

The problem isn’t just AI users; it’s the tools themselves. Research shows that generative AI can be persuasive and influence opinions through personalized conversation. For instance, Grok, X’s AI chatbot, has few content limitations and amplifies harmful content rather than providing neutral responses. Despite Grok’s well-documented inaccuracies and problematic outputs, U.S. federal agencies have continued to integrate it into operations with minimal public explanation.

The broader concern is that AI systems are often optimized for engagement and responsiveness rather than accuracy. A system designed to keep users interacting is not a system designed to protect the integrity of public discourse. It’s especially worrisome that bureaucratic agencies are using these tools, effectively encouraging their adoption among citizens.

All of this creates a political environment where someone without the time or expertise to fact-check everything is at a structural disadvantage. Democracy relies on an informed public, yet AI is making that harder to achieve by design.

The long-term consequences of this may extend beyond misinformation to a deeper erosion of public trust, not just in media, but in institutions and democratic practices themselves.

Syracuse University students, like college students everywhere, aren’t insulated from these dynamics. We are immersed in them, especially as AI becomes more embedded in our education. Our political views, social awareness and even our sense of reality are increasingly shaped by algorithmic feeds and AI-mediated content. This makes critical thinking and media literacy no longer optional skills but civic responsibilities.

Students need to be more intentional about verifying sources and resisting the urge to passively accept information simply because it seems authoritative or widely shared. Universities often frame civic engagement as voting or activism, but in the age of AI, it also means actively defending the quality of the information environment we navigate every day.

Emma Donohue is a sophomore studying political science and citizenship and civic engagement. She can be reached at efdonohu@syr.edu.